Why GPT Image 2 Images Feel More Useful for Creators

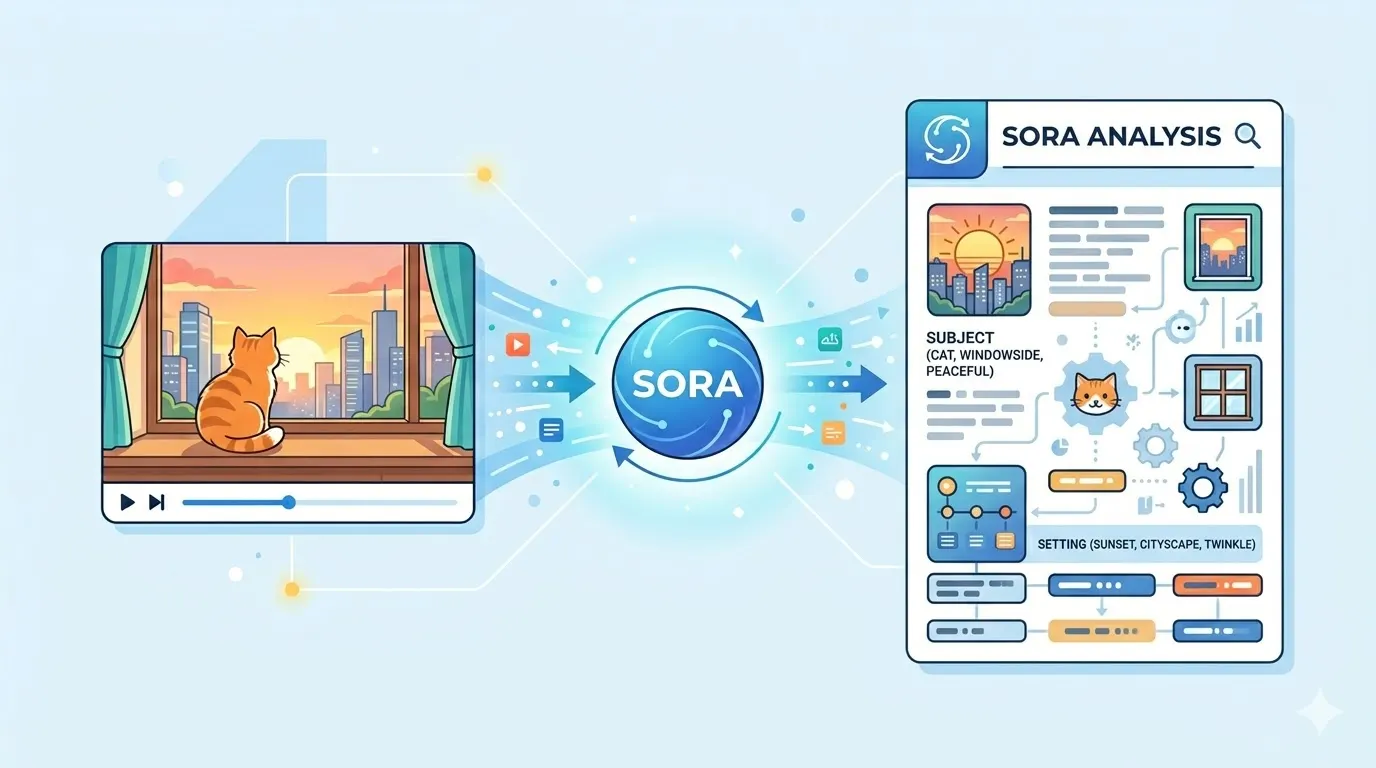

GPT Image 2 is getting attention because its images feel less like experiments and more like assets creators can actually use. It is not just about sharper details or prettier styles. The real upgrade is practical: clearer text, cleaner layouts, more consistent characters, polished product visuals, and stronger first frames for AI videos.

For creators, that matters. A good AI image should not only look impressive for five seconds. It should be useful enough for a blog cover, thumbnail, social post, ad concept, or visual story.

So what actually feels different in GPT Image 2? Let’s look at where it improves, and where it still feels like AI.

Why GPT Image 2 Feels Different From Older AI Image Models

Older AI image models could look impressive at first glance, but the flaws showed up quickly: broken text, messy layouts, inconsistent characters, and polished visuals that still felt artificial.

GPT Image 2 feels different because it handles the practical side of image generation better. Posters look more readable, products are clearer, characters stay more recognizable, and visuals feel more purposeful. That is why creators are paying attention: it does not just make prettier images, but more usable ones.

The Image Effects People Notice Most

GPT Image 2 feels different because its improvements show up in places creators actually use. The results are not just prettier; they are easier to turn into thumbnails, covers, product visuals, story assets, and first frames for videos.

Text in Images Looks Much More Readable

Text is one of the clearest improvements. Older AI image models could create a strong poster background, then ruin it with broken letters, fake words, or unreadable symbols. That made the image hard to use for thumbnails, ads, product labels, menus, and social posts.

GPT Image 2 handles short text better. Titles look cleaner, labels are easier to read, and simple poster copy feels more intentional.
This matters because creator visuals often depend on just a few clear words: a YouTube thumbnail needs a hook, a TikTok cover needs a bold phrase, and a product mockup needs a label that does not look broken.

Still, it is not perfect. Long text, prices, dates, brand names, small disclaimers, and non-English copy still need manual checking.

Posters and Covers Feel More Designed

GPT Image 2 also makes posters, covers, and promotional visuals feel more complete. Instead of placing random text over a nice background, it often creates a clearer relationship between the subject, title, spacing, lighting, and background. That makes it useful for blog covers, YouTube thumbnails, TikTok covers, product ads, campaign images, and social graphics.

The key word is direction. GPT Image 2 can quickly help you explore a visual idea, but it does not replace real design files. A generated poster is still a flat image, not a layered Figma or Photoshop file.

Characters Stay More Consistent

Character consistency is another effect creators care about. If you are making a story, comic, mascot, or AI video, one good image is not enough. The character needs to stay recognizable across scenes.

GPT Image 2 seems better at keeping the face, outfit, colors, and general style connected. This is useful for character references, storyboards, expression variations, and AI video first frames. A stronger first frame gives image-to-video tools a better starting point.

Realistic Images Look More Polished

GPT Image 2 can create clean, polished realistic images. Portraits, product mockups, lifestyle scenes, studio shots, and commercial visuals often look more refined and closer to usable brand material.

But polished does not always mean natural. Some images still look too smooth, too controlled, or slightly artificial. For creators, the goal is not just to make an image look expensive. It should also feel believable.

Structured Images Are More Useful

One of the most useful changes is how GPT Image 2 handles structured visuals. These are images that explain something, such as comics, diagrams, product explainers, step-by-step graphics, maps, or before-and-after images.

This matters because many creator visuals need to communicate quickly. GPT Image 2 seems better at organizing panels, labels, titles, and sections, but facts, numbers, and instructions still need review before publishing.

Where GPT Image 2 Still Feels Like AI

GPT Image 2 is more useful than older AI image models, but it still has limits. The problems usually appear when the image needs exact text, natural realism, or a less polished everyday look.

Long Text Can Still Go Wrong

Short titles and labels are much better, but long text is still risky. A poster with one bold headline may look clean, while a detailed infographic, product description, or paragraph can still include small mistakes.

This matters for ads, product visuals, tutorials, and educational graphics. If the words are important, they should always be checked manually.

Non-English Text Still Needs Checking

Non-English text has improved, but it is not fully reliable. Chinese, Japanese, Korean, Arabic, and other languages may look visually convincing, but some characters or words can still be wrong.

For multilingual creators, GPT Image 2 is useful for quick concepts, but final publishing still needs native-language review.

Nature Scenes Can Look Too Synthetic

Nature is harder than it looks. GPT Image 2 can create beautiful landscapes, but trees, clouds, mountains, grass, water, and sunlight may feel too sharp or too controlled. Sometimes every part of the image looks equally detailed, which makes the scene feel less natural. The result can be beautiful, but not always believable.

Some Images Are Too Perfect

Many GPT Image 2 images look clean, polished, and high-end.
That works well for product concepts or commercial visuals, but it can feel fake for everyday content. Real photos often have small imperfections: uneven lighting, messy backgrounds, imperfect skin, or casual framing.

If you want a more authentic result, ask for natural lighting, realistic imperfections, less polished textures, or casual photography instead of a luxury ad look.

How to Use GPT Image 2 for Free

You can use GPT Image 2 directly in ChatGPT. After the update, some users

