With dozens of AI video generators flooding the market — each claiming to be the best — creators and marketers face a real challenge. Which tool actually delivers the best visual quality? Which one fits your specific workflow? And which claims are hype versus substance?

This guide breaks down exactly what HappyHorse 1.0 is, what makes it stand out, where it fits into real-world workflows, and how it compares head-to-head against 10 leading AI video tools in a single, comprehensive comparison table.

What Is HappyHorse 1.0?

HappyHorse 1.0 is an AI video generation model that claimed the top position on the Artificial Analysis global AI video leaderboard, one of the most widely referenced independent benchmarks for AI video quality.

Unlike models that launch with fanfare from well-known labs, HappyHorse appeared anonymously and let its output speak first.

It supports both text-to-video and image-to-video generation, producing native 1080p video with synchronized audio in a single pass.

The Origin Story — From Mystery Model to #1

HappyHorse 1.0 first appeared as an anonymous entry on the Artificial Analysis Video Arena, a platform where real users vote in blind A/B comparisons between AI-generated videos. Without any branding or marketing, the model earned the #1 Elo ranking in both the text-to-video (1333 Elo) and image-to-video (1392 Elo) categories, both measured without audio.

Core Technical Specs at a Glance

Under the hood, HappyHorse 1.0 is reportedly built on a 15B-parameter single-stream Transformer architecture (the parameter count is claimed but has not been independently verified). Here are the key specs:

● Architecture: Single-stream Transformer with self-attention (Transfusion-style)

● Inference: 8-step DMD-2 distillation — significantly fewer denoising steps than most competitors

● Output: Native 1080p resolution at 24fps, with multiple aspect ratios

● Audio: Joint video and audio generation in a single pass

● Lip-sync: Multilingual support across 6 languages

● Inference mode: CFG-less (classifier-free guidance not required), reducing compute overhead

● Clip duration: Up to 5 seconds per generation
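To make "single-stream" more concrete: in a Transfusion-style design, tokens from different modalities are concatenated into one sequence and processed by the same Transformer layers, so any token can attend to any other. The toy sketch below illustrates only that idea. It is not HappyHorse’s code; the token values are made up, the attention is full (bidirectional) for simplicity, and real models add learned query/key/value projections, positional information, many heads, and many layers.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1 (max-subtraction for numerical stability)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head scaled dot-product attention with Q = K = V = tokens (no learned projections)."""
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted mix of ALL tokens in the stream, whatever their modality.
        outputs.append([sum(wj * v[i] for wj, v in zip(w, tokens)) for i in range(d)])
    return outputs, weights


# Hypothetical 2-dimensional embeddings for each modality.
text_tokens = [[1.0, 0.0], [0.5, 0.5]]
video_tokens = [[0.0, 1.0], [0.2, 0.8], [0.1, 0.9]]
audio_tokens = [[0.7, 0.3]]

# Single stream: concatenate every modality into one sequence and attend over all of it.
stream = text_tokens + video_tokens + audio_tokens
outputs, weights = self_attention(stream)
print(len(outputs))                          # 6
print([round(sum(w), 6) for w in weights])   # [1.0, 1.0, 1.0, 1.0, 1.0, 1.0]
print(weights[5][:2])                        # how much the audio token attends to the two text tokens
```

Because every output row mixes information from all six tokens, audio tokens can draw on video tokens (and vice versa) within the same layer, which is one plausible way a joint model keeps sound and picture aligned.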

Key Advantages of HappyHorse 1.0

What sets HappyHorse apart isn’t just one feature — it’s a combination of capabilities that no single competitor currently matches. Here’s what matters most for creators evaluating their options.

#1 Leaderboard Ranking — Verified by Blind User Votes

Many AI tools claim to be “the best” based on internal benchmarks or cherry-picked samples.

HappyHorse’s ranking is different. The Artificial Analysis Video Arena uses blind A/B comparisons — real users watch two AI-generated videos side by side without knowing which model made which, then vote for the one they prefer. This produces an Elo rating (the same system used to rank chess players) that reflects genuine human preference.
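For readers unfamiliar with Elo, the core arithmetic is short: a rating gap maps to an expected preference rate, and each vote nudges both ratings toward the observed result. This sketch assumes the classic chess formulation (400-point scale, K-factor of 32). The article does not say exactly how Artificial Analysis computes its ratings, and leaderboards often fit ratings over all votes at once rather than one vote at a time. The ratings in the example are hypothetical.

```python
def expected_score(rating_a, rating_b):
    """Probability that A is preferred over B under the standard Elo model (400-point scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update(rating_a, rating_b, a_won, k=32.0):
    """New (rating_a, rating_b) after one blind A/B vote; K controls the step size."""
    e_a = expected_score(rating_a, rating_b)
    s_a = 1.0 if a_won else 0.0
    delta = k * (s_a - e_a)                    # positive if A did better than expected
    return rating_a + delta, rating_b - delta  # zero-sum: B loses what A gains


# Hypothetical ratings, not values from the leaderboard.
a, b = update(1200.0, 1200.0, a_won=True)
print(a, b)                                      # 1216.0 1184.0
print(round(expected_score(1400.0, 1000.0), 3))  # 0.909
```

Under this model, a 400-point gap corresponds to roughly a 91% expected preference rate, while two equally rated models split votes 50/50.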

HappyHorse 1.0 achieved 1333 Elo in text-to-video and 1392 in image-to-video (without audio), placing it above Seedance 2.0, Kling 3.0, Veo 3, and every other model in the arena.

Joint Video and Audio Generation

Most AI video generators produce silent video. Want sound effects or voiceover? You need a separate tool — adding time, cost, and complexity.

HappyHorse 1.0 generates synchronized audio alongside video in a single pass, including ambient sound effects, environmental audio, and voice. For creators on platforms where audio is essential (TikTok, Reels, YouTube Shorts), this eliminates an entire production step.

Only a few competitors offer native audio, notably Seedance 2.0 (which leads the with-audio Elo rankings), Veo 3, and MiniMax Hailuo. HappyHorse pairs top-ranked visual quality with native audio, a combination few tools offer.

Multilingual Lip-Sync Across 6 Languages

Built-in lip-sync capability supporting multiple languages makes HappyHorse particularly valuable for global content creators. Instead of shooting separate versions or manually dubbing content for different markets, you can generate localized video with natural-looking lip movements directly.

This is especially relevant for:

● Marketing teams running campaigns across multiple regions

● E-commerce sellers creating product videos for international platforms

● Educational content creators producing multilingual explainer videos

No manual dubbing. No third-party lip-sync tools. It’s built into the model.

Open Source Promise — Local Deployment Potential

One of the most discussed aspects of HappyHorse 1.0 is its planned open-weight release. According to community sources and developer discussions, the team intends to release:

● The base model weights

● A distilled version for faster inference

● Super-resolution model weights

● Inference code for local deployment

Important caveat: As of this writing, the weights have not been publicly released. The HuggingFace repository remains empty, and the GitHub repo (brooks376/Happy-Horse-1.0) has been flagged by the community as unofficial. Verify through official channels before trusting any download links.
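If and when official weights appear, one practical safeguard is to compare each downloaded file’s SHA-256 hash with a checksum published through an official channel. This assumes the team will publish checksums, which has not been confirmed; the helper names below are illustrative.

```python
import hashlib
import os
import tempfile


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in 1 MiB chunks so multi-gigabyte weight files never sit fully in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path, published_hex):
    """Compare a file's hash with a hex checksum copied from an official source (case-insensitive)."""
    return sha256_of(path) == published_hex.strip().lower()


# Demo with a throwaway file standing in for a downloaded weight shard.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"example weights")
    path = tmp.name
published = hashlib.sha256(b"example weights").hexdigest()
print(matches_published_checksum(path, published))  # True
print(matches_published_checksum(path, "0" * 64))   # False
os.remove(path)
```

A mismatch means the file is corrupted or is not the file the publisher hashed; either way, do not load it.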

Efficient 8-Step Inference

Speed matters when you’re generating video at scale. HappyHorse uses DMD-2 distillation to achieve generation in just 8 denoising steps — far fewer than the 25-50 steps many competitors require.

Fewer steps means:

● Faster generation per clip

● Lower compute costs per video

● More practical for batch content creation

The Elo rankings suggest this efficiency does not come at a visible cost in quality: in blind votes, HappyHorse’s 8-step output is preferred over competing models, many of which run more denoising steps.
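The compute saving can be estimated with simple arithmetic. Classifier-free guidance normally needs two network evaluations per denoising step (one conditional, one unconditional), so a CFG-less 8-step sampler needs far fewer forward passes than a 25-50 step sampler with CFG. The competitor settings below are assumptions for illustration, and the sketch treats every forward pass as equal cost; real wall-clock time also depends on model size, resolution, and hardware.

```python
def forward_passes(steps, uses_cfg):
    """Network evaluations per clip: CFG adds an unconditional pass at every step."""
    return steps * (2 if uses_cfg else 1)


happyhorse = forward_passes(8, uses_cfg=False)    # 8 steps, CFG-less (per the specs above)
typical_low = forward_passes(25, uses_cfg=True)   # assumption: 25 steps with CFG
typical_high = forward_passes(50, uses_cfg=True)  # assumption: 50 steps with CFG

print(happyhorse, typical_low, typical_high)                # 8 50 100
print(typical_low / happyhorse, typical_high / happyhorse)  # 6.25 12.5
```

Under these assumptions, HappyHorse needs roughly 6 to 12 times fewer forward passes per clip.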

HappyHorse 1.0 vs 10 AI Video Generators — Full Comparison Table

This is the section you’ll want to bookmark. Below is a comprehensive side-by-side comparison of HappyHorse 1.0 against 10 leading AI video generation tools, covering the dimensions that matter most when choosing a tool for your workflow.

Comparison Criteria Explained

Before diving into the table, here’s what each column measures:

● Video Quality Ranking: Elo score from Artificial Analysis blind comparisons (where available), or relative benchmark positioning

● Max Resolution: Highest native output resolution supported

● Max Duration: Longest single clip the model can generate

● Audio Support: Whether the model generates audio natively alongside video

● Open Source: Whether model weights are available for local deployment

● Pricing Model: How you pay — free credits, subscription, per-generation, or API-based

● Best Use Case: The scenario where each tool has the strongest competitive advantage

HappyHorse 1.0 vs. 10 Competitors: The Comparison Table

| # | Model | Developer | Quality Ranking | Max Resolution | Max Duration | Audio | Open Source | Pricing | Best Use Case |
|---|---|---|---|---|---|---|---|---|---|
| 1 | HappyHorse 1.0 | Alibaba Taotian (unconfirmed) | #1 Elo (1333 T2V / 1392 I2V, no audio) | 1080p | 5s | ✅ Native | Planned (open weights) | Free credits; ~$1/5s clip | Top visual quality + audio |
| 2 | Seedance 2.0 | ByteDance | Former #1; leads with-audio | 720p | 15s | ✅ Via Dreamina | ❌ Closed | $1–3/gen | Longer clips with audio |
| 3 | Kling 3.0 | Kuaishou | Top-tier visual quality | 1080p | 10s | ❌ No | ❌ Closed | Freemium | High-quality cinematic clips |
| 4 | Veo 3 | Google DeepMind | High (benchmark leader) | 4K upscale | 8s | ✅ Native | ❌ Closed | Via Vertex AI | Enterprise-grade resolution |
| 5 | Wan 2.2 | Alibaba Tongyi | Solid mid-tier | 720p | 5s | ❌ No | ✅ Open weights | Free | Open-source baseline |
| 6 | LTX 2.3 | Lightricks | Mid-tier; fast inference | 720p | 5s | ❌ No | ✅ Open source | Free | Fast local generation |
| 7 | Runway Gen-4 | Runway | Industry standard | 4K | 10s | ❌ No | ❌ Closed | Subscription ($12+/mo) | Professional production |
| 8 | Pika 2.0 | Pika Labs | Creative effects leader | 1080p | 4s | ❌ No | ❌ Closed | Freemium | Stylized effects & motion |
| 9 | Sora | OpenAI | Strong T2V quality | 1080p | 20s | ❌ No | ❌ Closed | ChatGPT Plus ($20/mo) | Long-form text-to-video |
| 10 | PixVerse C1 | PixVerse | Character consistency focus | 1080p | 5s | ❌ No | ❌ Closed | Freemium | Consistent character videos |
| 11 | Minimax / Hailuo | MiniMax | Strong quality; audio capable | 720p | 6s | ✅ Native | ❌ Closed | Freemium | Audio-synced short clips |
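To turn the table into a quick shortlist for your own requirements, the rows can be encoded as data and filtered. The durations, audio flags, and weight status below are copied from the table; "planned" weights are treated as not yet available. The `VideoModel` type and `shortlist` helper are illustrative, not part of any tool’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VideoModel:
    name: str
    max_duration_s: int
    audio: bool
    weights: str  # "open", "planned", or "closed", as listed in the table


MODELS = [
    VideoModel("HappyHorse 1.0", 5, True, "planned"),
    VideoModel("Seedance 2.0", 15, True, "closed"),
    VideoModel("Kling 3.0", 10, False, "closed"),
    VideoModel("Veo 3", 8, True, "closed"),
    VideoModel("Wan 2.2", 5, False, "open"),
    VideoModel("LTX 2.3", 5, False, "open"),
    VideoModel("Runway Gen-4", 10, False, "closed"),
    VideoModel("Pika 2.0", 4, False, "closed"),
    VideoModel("Sora", 20, False, "closed"),
    VideoModel("PixVerse C1", 5, False, "closed"),
    VideoModel("Minimax / Hailuo", 6, True, "closed"),
]


def shortlist(models, min_duration_s=0, need_audio=False, need_open_weights_now=False):
    """Names of models that satisfy every requirement, in table order."""
    return [
        m.name
        for m in models
        if m.max_duration_s >= min_duration_s
        and (m.audio or not need_audio)
        and (m.weights == "open" or not need_open_weights_now)
    ]


print(shortlist(MODELS, min_duration_s=10, need_audio=True))  # ['Seedance 2.0']
print(shortlist(MODELS, need_open_weights_now=True))          # ['Wan 2.2', 'LTX 2.3']
```

Adding columns such as price or resolution works the same way: one field on the dataclass and one condition in the filter.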

Key Takeaways From the Comparison

Several patterns stand out:

● HappyHorse leads in verified quality — the only model holding #1 Elo in both T2V and I2V based on blind user preference.

● HappyHorse is the only top-tier model with a stated open-weight roadmap: Wan 2.2 and LTX 2.3 are open but rank lower, and every other leading model in the table is closed.

● Seedance 2.0 wins on duration and audio: 15 seconds per clip with strong audio, but at $1–3 per generation, spend adds up quickly when iterating on prompts.

● Veo 3 and Runway Gen-4 lead on resolution with 4K output (upscaled in Veo 3’s case); Veo 3 is accessed through Google’s Vertex AI, while Runway subscriptions start at $12/month.
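Per-generation prices are easier to compare once normalized to cost per second of finished video. The figures come from the table; HappyHorse’s ~$1 is approximate, and the sketch assumes each Seedance generation yields a full 15-second clip, which the table does not state explicitly.

```python
def cost_per_second(price_usd, clip_seconds):
    """Price of one generation divided by the seconds of video it produces."""
    return price_usd / clip_seconds


happyhorse = cost_per_second(1.00, 5)      # "~$1/5s clip" (approximate)
seedance_low = cost_per_second(1.00, 15)   # low end of $1–3 per generation (assumed 15 s clip)
seedance_high = cost_per_second(3.00, 15)  # high end of $1–3 per generation (assumed 15 s clip)

print(f"HappyHorse 1.0: ${happyhorse:.2f}/s")                         # $0.20/s
print(f"Seedance 2.0:   ${seedance_low:.2f}-${seedance_high:.2f}/s")  # $0.07-$0.20/s
```

On these assumptions, Seedance is no more expensive per second of output; the difference lies in the price of each attempt, which matters most when you regenerate a prompt many times.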

How to Get Started With HappyHorse 1.0

Ready to try it yourself? Here is the most practical way to access HappyHorse 1.0 right now, which addresses the biggest barrier the community has identified: figuring out where and how to actually use it.

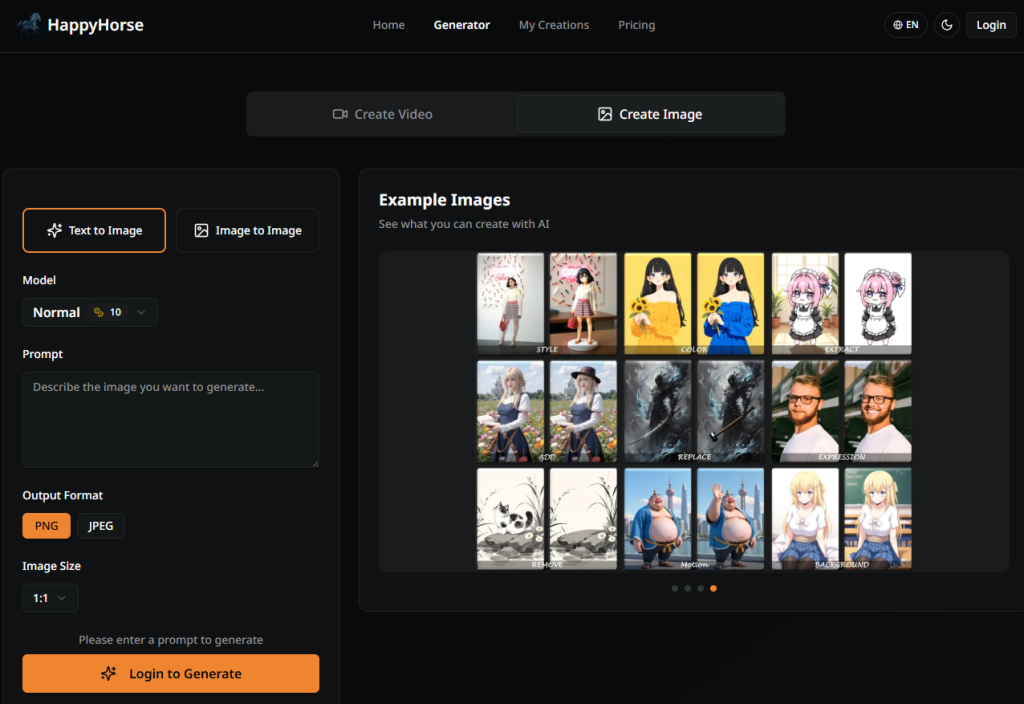

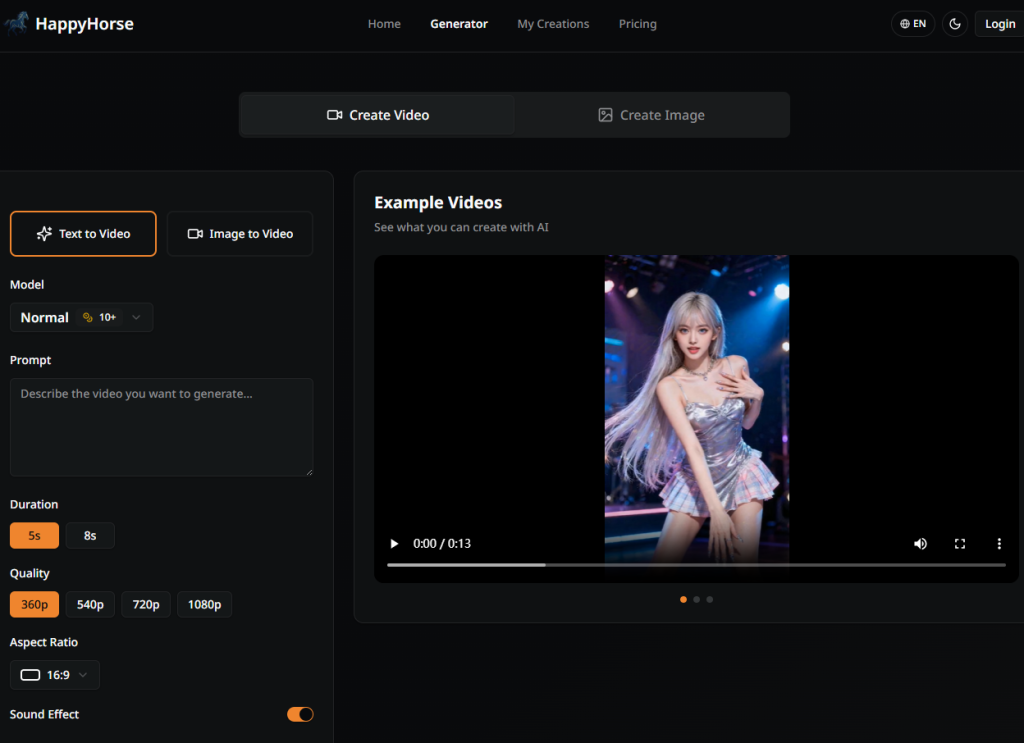

Access via the Official Demo Site

The most straightforward path is the official demo at happyhorse.video:

- Create an account and claim your free credits

- Choose your mode: text-to-video or image-to-video

- Enter your prompt or upload a reference image

- Configure settings: aspect ratio, duration, audio preference

- Generate and download your clip

Free credits let you evaluate quality before committing any money.

What to Watch Out For

No tool is perfect. Here’s what you should know before committing to HappyHorse 1.0.

5-Second Clip Duration Limit

HappyHorse currently generates a maximum of 5 seconds per clip. In a market where Seedance 2.0 offers 15 seconds, Sora offers 20, and Runway offers 10, this is a meaningful limitation.

Workarounds:

● Generate multiple 5-second clips and stitch them together in an editor

● Use HappyHorse for hero shots and key moments, then fill longer sequences with other tools

● For short-form social content (TikTok, Reels), 5 seconds often covers a complete scene
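The stitching workaround can be planned in a few lines: work out how many 5-second generations a target runtime needs, then join the downloaded clips with ffmpeg’s concat demuxer. The file names are hypothetical, and the sketch only builds the list file text and the command rather than running them. Stream copy (`-c copy`) assumes every clip shares the same codec, resolution, and frame rate, which should hold for clips generated with identical settings. Stitching does not make separately generated clips visually continuous; consistent prompts or reference images still matter.

```python
import math

MAX_CLIP_S = 5  # per-generation limit stated above for HappyHorse 1.0


def clips_needed(target_seconds, max_clip_s=MAX_CLIP_S):
    """How many generations are needed to cover a target runtime (rounded up)."""
    return math.ceil(target_seconds / max_clip_s)


def concat_list(paths):
    """Text for ffmpeg's concat demuxer: one `file '<path>'` line per clip, in order."""
    lines = []
    for p in paths:
        if "'" in p:  # keep the sketch simple: no quote escaping
            raise ValueError(f"rename clips without single quotes: {p!r}")
        lines.append(f"file '{p}'")
    return "\n".join(lines) + "\n"


def ffmpeg_concat_args(list_file, output):
    """Argument list for subprocess.run(); -c copy joins clips without re-encoding."""
    # -safe 0 lets the list reference absolute paths or unusual file names.
    return ["ffmpeg", "-f", "concat", "-safe", "0", "-i", list_file, "-c", "copy", output]


n = clips_needed(30)                                    # 6 clips for a 30-second sequence
clips = [f"clip_{i:02d}.mp4" for i in range(1, n + 1)]  # hypothetical download names
print(concat_list(clips), end="")
print(" ".join(ffmpeg_concat_args("clips.txt", "sequence.mp4")))
```

Save the printed `file` lines as `clips.txt` next to the clips, then run the printed ffmpeg command.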

Open Source Status — Verified vs. Claimed

The open-source promise is one of HappyHorse’s biggest selling points — but it’s important to distinguish what’s confirmed from what’s claimed:

| Status | Detail |
|---|---|
| ✅ Confirmed | #1 Elo ranking on Artificial Analysis (independently verified) |
| ✅ Confirmed | Joint video + audio generation capability |
| ✅ Confirmed | Available via official demo site (happyhorse.video) |
| ⚠️ Claimed | 15B-parameter architecture |
| ⚠️ Claimed | Fully open-weight release (base + distilled + super-res + inference code) |
| ⚠️ Claimed | Connection to Alibaba’s Taotian Group |
| ❌ Not yet available | Public model weights on HuggingFace or GitHub |

Until weights are publicly available and independently verified, treat open-source claims with cautious optimism.

Scam Domains and Impersonation Sites

The hype around HappyHorse has attracted scam websites capitalizing on the name. Reddit users have flagged multiple domains impersonating the official project.

To stay safe:

● Only use the official demo site at happyhorse.video

● Verify any download links through Artificial Analysis or official developer channels

● Be skeptical of GitHub repositories claiming to host weights — the community has flagged unofficial repos

● Never enter payment information on unverified sites
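Checking links by eye is error-prone, because lookalike domains often contain the real name. A safer habit is to parse the URL and compare the actual hostname against an allowlist. The sketch assumes happyhorse.video (the demo domain named in this article) and its subdomains are the only trusted hosts and, as a policy choice, rejects non-HTTPS links; update the allowlist if official channels announce other domains.

```python
from urllib.parse import urlsplit

# Assumption: the official demo domain named in this article, plus its subdomains.
OFFICIAL_HOSTS = {"happyhorse.video"}


def is_official(url):
    """True only for HTTPS URLs whose parsed hostname is an allowlisted domain or its subdomain."""
    parts = urlsplit(url)
    host = parts.hostname  # lowercased by urlsplit; None when the URL has no host
    if parts.scheme != "https" or host is None:
        return False
    # Compare whole labels, so "happyhorse.video.example.com" or "happyhorse-video.com" fail.
    return any(host == h or host.endswith("." + h) for h in OFFICIAL_HOSTS)


for url in [
    "https://happyhorse.video/",
    "https://happyhorse.video.example.com/",  # lookalike: real name used as a subdomain
    "https://happyhorse-video.com/",          # lookalike: hyphenated domain
    "http://happyhorse.video/",               # rejected by the HTTPS-only policy
]:
    print(is_official(url), url)
```

Comparing the parsed hostname, rather than searching the URL for the string "happyhorse", is what defeats the lookalike patterns.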

Conclusion

HappyHorse 1.0 has established itself as the top-ranked AI video generation model by verified user preference, with a unique combination of joint audio-video generation, multilingual lip-sync, and an open-source roadmap that no competitor currently matches.

Is it perfect? No. The 5-second clip limit is real, the open-source promise remains undelivered, and the team’s identity is still not officially confirmed. But the quality speaks for itself, as its #1 ranking in independent blind comparisons shows.

Ready to try it? Start with the official demo at happyhorse.video to test the quality firsthand.